From today's 爱可可 AI frontier picks

[CL] ALERT: Adapting Language Models to Reasoning Tasks

P Yu, T Wang, O Golovneva, B AlKhamissi, G Ghosh, M Diab, A Celikyilmaz

[Meta AI]

要点:

-

语言模型可以在少样本学习的情况下执行复杂任务; -

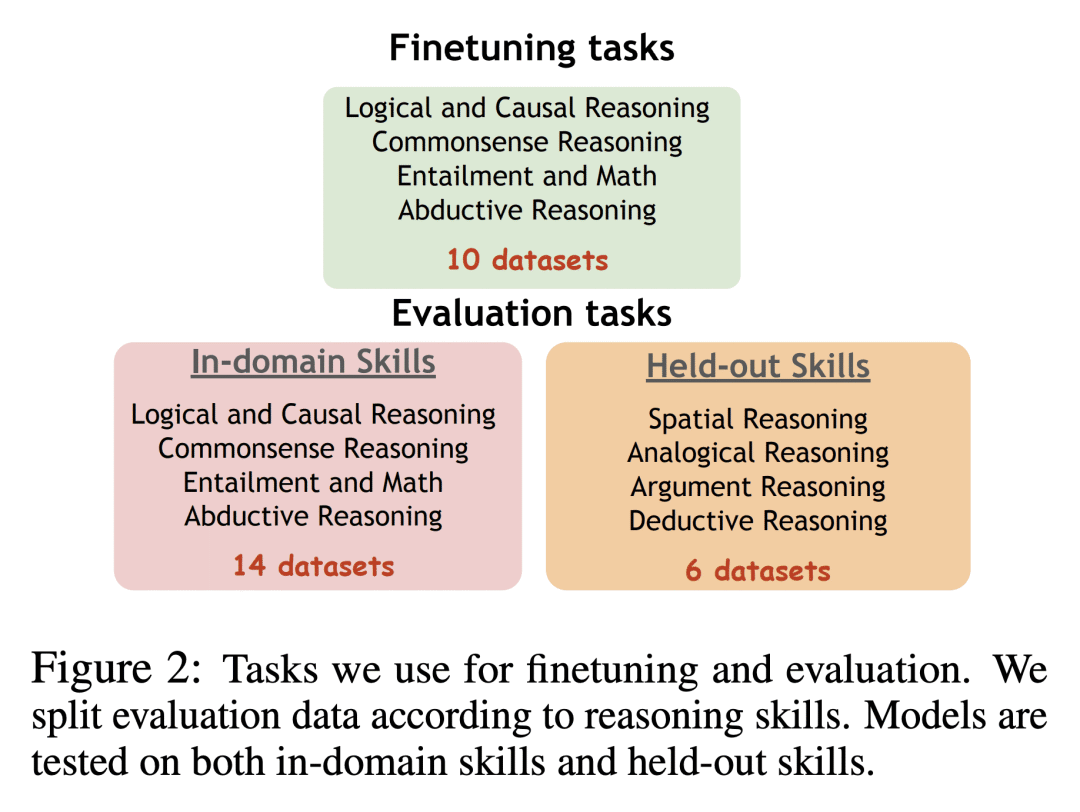

ALERT是一个基准,用于评估语言模型的细粒度推理能力,覆盖20多个数据集,涵盖10种不同的推理技能; -

微调可以提高语言模型的某些推理能力,但也可能导致它们过拟合提示模板,降低鲁棒性。

Abstract:

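Current large language models perform reasonably well on complex tasks that require step-by-step reasoning when given only a few examples (few-shot learning). Are these models applying reasoning skills learned during pretraining to reason beyond their training context, or are they merely memorizing their training corpus at a finer granularity and learning to understand context better? To tell these possibilities apart, the paper proposes ALERT, a benchmark and analysis suite for evaluating the reasoning ability of language models, which compares pretrained and finetuned models on complex tasks that require reasoning skills to solve. ALERT provides a test bed for evaluating the fine-grained reasoning skills of any language model; it spans more than 20 datasets and covers 10 different reasoning skills. The authors also use ALERT to study the role of finetuning. Through extensive empirical analysis, the paper finds that, compared with the pretraining stage, language models learn more reasoning skills during finetuning, such as textual entailment, abductive reasoning, and analogical reasoning. It also finds that finetuned language models tend to overfit to the prompt template, which hurts robustness and leads to generalization problems.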

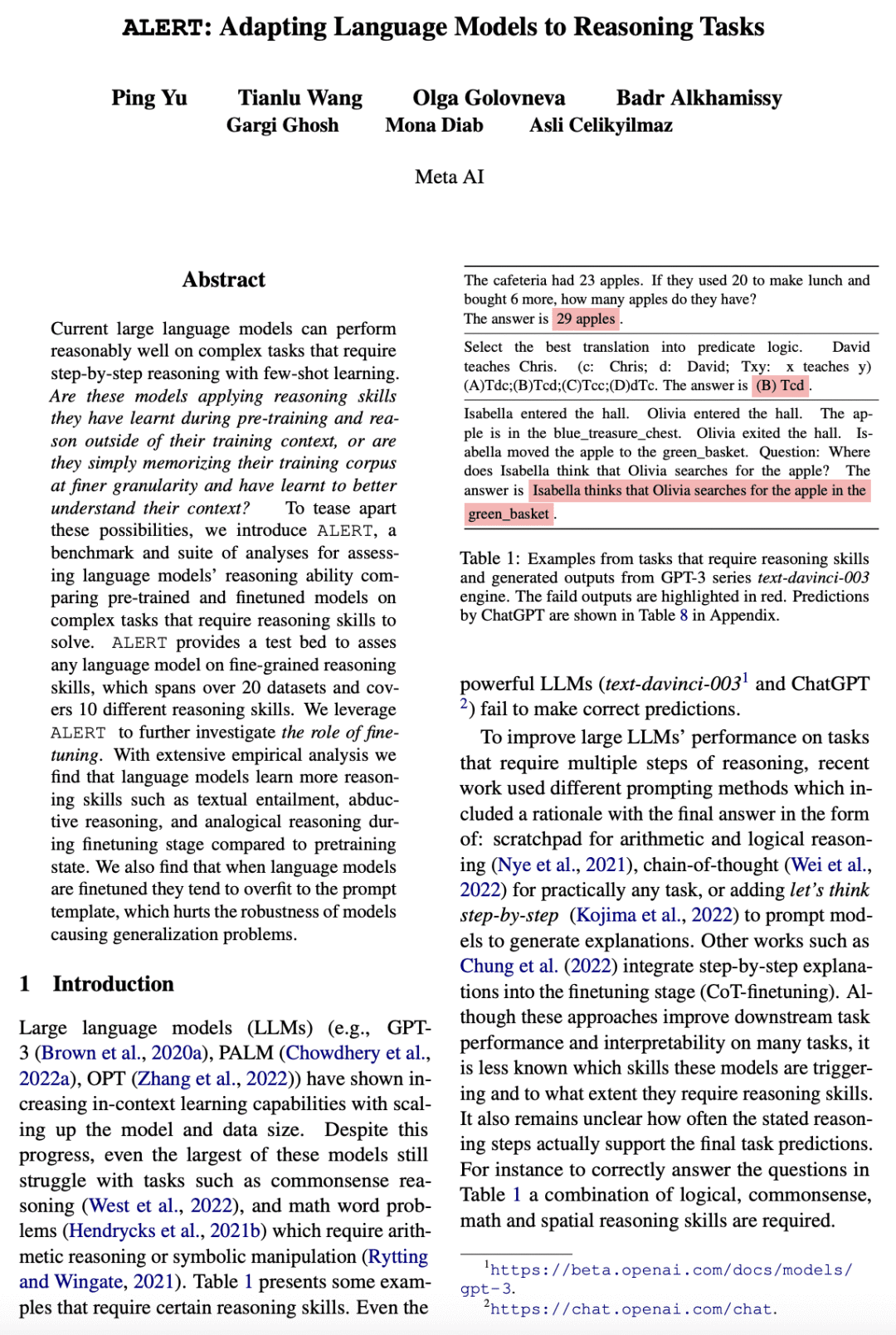

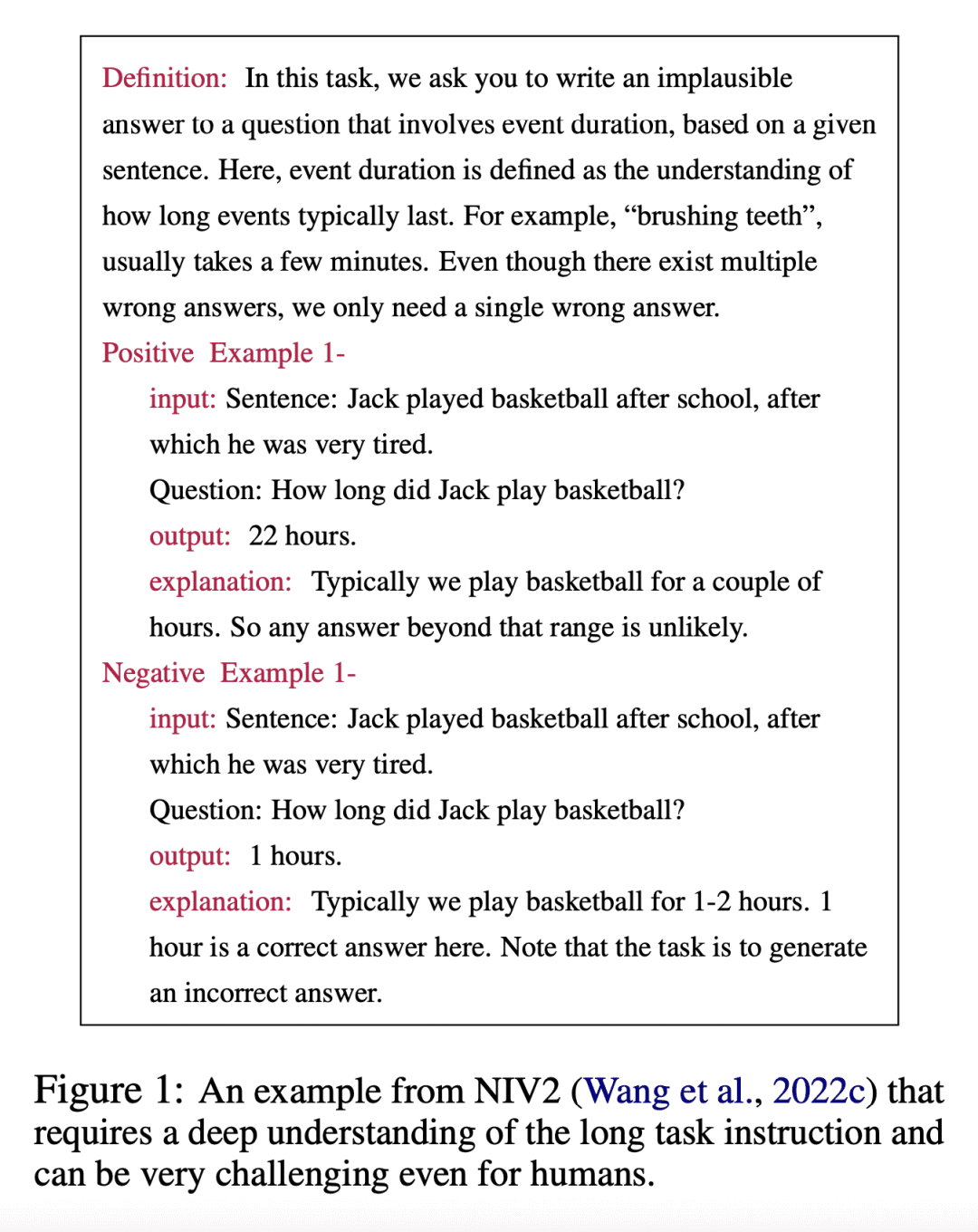

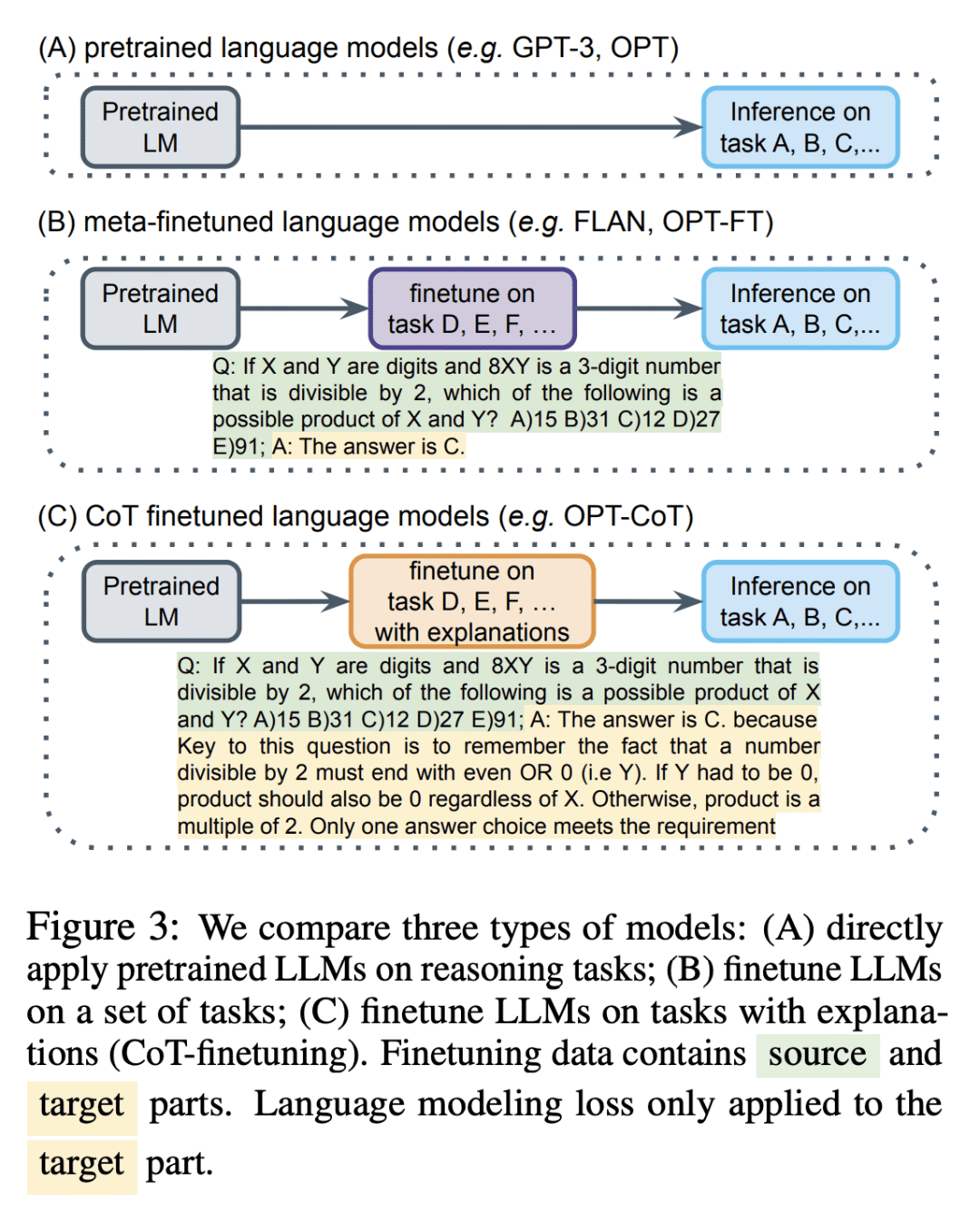

Current large language models can perform reasonably well on complex tasks that require step-by-step reasoning with few-shot learning. Are these models applying reasoning skills they have learnt during pre-training and reasoning outside of their training context, or are they simply memorizing their training corpus at finer granularity and have learnt to better understand their context? To tease apart these possibilities, we introduce ALERT, a benchmark and suite of analyses for assessing language models' reasoning ability, comparing pre-trained and finetuned models on complex tasks that require reasoning skills to solve. ALERT provides a test bed to assess any language model on fine-grained reasoning skills, which spans over 20 datasets and covers 10 different reasoning skills. We leverage ALERT to further investigate the role of finetuning. With extensive empirical analysis, we find that language models learn more reasoning skills, such as textual entailment, abductive reasoning, and analogical reasoning, during the finetuning stage compared to the pretraining stage. We also find that when language models are finetuned, they tend to overfit to the prompt template, which hurts the robustness of models, causing generalization problems.

Paper link: https://arxiv.org/abs/2212.08286
