LG - 机器学习 CV - 计算机视觉 CL - 计算与语言 AS - 音频与语音 RO - 机器人

| 转自爱可可爱生活

1、[LG] Multitask Prompted Training Enables Zero-Shot Task Generalization

V Sanh, A Webson, C Raffel, S H. Bach...

[Hugging Face & Brown University & BigScience]

基于多任务提示训练的零样本任务泛化。最近,大型语言模型被证明可以在一系列不同的任务上达到合理的零样本泛化。有人假设这是语言模型训练中隐性多任务学习的结果。那么,零样本泛化是否可以由显性多任务学习直接引发?为了大规模测试这个问题,本文开发了一个系统,可以轻松地将一般的自然语言任务映射为人类可读的提示形式。转换了一组大规模的有监督数据集,每个数据集都有使用不同自然语言的多种提示。这些带提示数据集允许对一个模型执行完全未见过的自然语言任务的能力进行基准测试。在这个涵盖各种任务的多任务混合集上对一个预训练的编-解码器模型进行微调。该模型在几个标准数据集上获得了强大的零样本性能,往往超过了高达16倍于其规模的模型。所提出方法在BIG-Bench基准的一个任务子集上获得了强大的性能,超过了高达6倍于其规模的模型。

Large language models have recently been shown to attain reasonable zero-shot generalization on a diverse set of tasks (Brown et al., 2020). It has been hypothesized that this is a consequence of implicit multitask learning in language model training (Radford et al., 2019). Can zero-shot generalization instead be directly induced by explicit multitask learning? To test this question at scale, we develop a system for easily mapping general natural language tasks into a human-readable prompted form. We convert a large set of supervised datasets, each with multiple prompts using varying natural language. These prompted datasets allow for benchmarking the ability of a model to perform completely unseen tasks specified in natural language. We fine-tune a pretrained encoder-decoder model (Raffel et al., 2020; Lester et al., 2021) on this multitask mixture covering a wide variety of tasks. The model attains strong zero-shot performance on several standard datasets, often outperforming models up to 16× its size. Further, our approach attains strong performance on a subset of tasks from the BIG-Bench benchmark, outperforming models up to 6× its size. All prompts and trained models are available at github.com/bigscience-workshop/promptsource/ and huggingface.co/bigscience/T0pp.

https://weibo.com/1402400261/KDo46rXeE

2、[CV] Non-deep Networks

A Goyal, A Bochkovskiy, J Deng, V Koltun

[Princeton University & Intel Labs]

非深度网络。深度是深度神经网络的标志。但更深的深度意味着更多的顺序计算和更高的延迟。这就提出了一个问题——有可能建立高性能的"非深度"神经网络吗?本文表明,这是可能的。采用并行子网络,而不是一层一层堆叠。有助于有效减少深度,同时保持高性能。利用并行子结构,一个深度仅为12的网络可以在ImageNet上达到80%以上的最高准确率,在CIFAR10上达到96%,在CIFAR100上达到81%。一个低深度(12)的骨干网络,可以在MS-COCO上达到48%的AP。本文分析了所设计的扩展规则,并展示了如何在不改变网络深度的情况下提高性能。提供了一个概念证明,说明非深度网络可以用来建立低延迟的识别系统。

Depth is the hallmark of deep neural networks. But more depth means more sequential computation and higher latency. This begs the question – is it possible to build high-performing “non-deep” neural networks? We show that it is. To do so, we use parallel subnetworks instead of stacking one layer after another. This helps effectively reduce depth while maintaining high performance. By utilizing parallel substructures, we show, for the first time, that a network with a depth of just 12 can achieve top-1 accuracy over 80% on ImageNet, 96% on CIFAR10, and 81% on CIFAR100. We also show that a network with a low-depth (12) backbone can achieve an AP of 48% on MS-COCO. We analyze the scaling rules for our design and show how to increase performance without changing the network’s depth. Finally, we provide a proof of concept for how non-deep networks could be used to build low-latency recognition systems. Code is available at https://github.com/imankgoyal/NonDeepNetworks.

https://weibo.com/1402400261/KDo7iafhJ

3、[LG] How to train RNNs on chaotic data?

Z Monfared, J M. Mikhaeil, D Durstewitz

[Heidelberg University]

如何用混沌数据训练RNN?递归神经网络(RNN)是广泛使用的机器学习工具,用于对顺序和时间序列数据进行建模。众所周知,RNN很难训练,因为其损失梯度在时间上反向传播,在训练中容易饱和或发散,就是所谓的梯度爆炸和消失的问题。之前解决这个问题的方法要么是建立在相当复杂的、专门设计的带有门控缓冲器的结构上,要么是最近施加了一些限制,确保收敛到一个固定点或限制递归矩阵(的特征谱)。然而,这种约束对RNN的表现力有严重的限制。基本的内在动态,如多态性或混沌性被禁用。这与自然界和社会中遇到的许多(如果不是大多数)时间序列的混沌性质本质上是不一致的。本文通过将RNN训练期间的损失梯度与RNN生成的轨道的Lyapunov谱联系起来,对这个问题进行了全面的理论处理。从数学上证明,产生稳定平衡或循环行为的RNN具有有界梯度,而具有混乱动态的RNN的梯度总是发散。基于这些分析和见解,为混沌数据提供了一种有效而简单的训练技术,并指导如何根据Lyapunov谱选择相关的超参数。

Recurrent neural networks (RNNs) are wide-spread machine learning tools for modeling sequential and time series data. They are notoriously hard to train because their loss gradients backpropagated in time tend to saturate or diverge during training. This is known as the exploding and vanishing gradient problem. Previous solutions to this issue either built on rather complicated, purpose-engineered architectures with gated memory buffers, or more recently imposed constraints that ensure convergence to a fixed point or restrict (the eigenspectrum of) the recurrence matrix. Such constraints, however, convey severe limitations on the expressivity of the RNN. Essential intrinsic dynamics such as multistability or chaos are disabled. This is inherently at disaccord with the chaotic nature of many, if not most, time series encountered in nature and society. Here we offer a comprehensive theoretical treatment of this problem by relating the loss gradients during RNN training to the Lyapunov spectrum of RNN-generated orbits. We mathematically prove that RNNs producing stable equilibrium or cyclic behavior have bounded gradients, whereas the gradients of RNNs with chaotic dynamics always diverge. Based on these analyses and insights, we offer an effective yet simple training technique for chaotic data and guidance on how to choose relevant hyperparameters according to the Lyapunov spectrum.

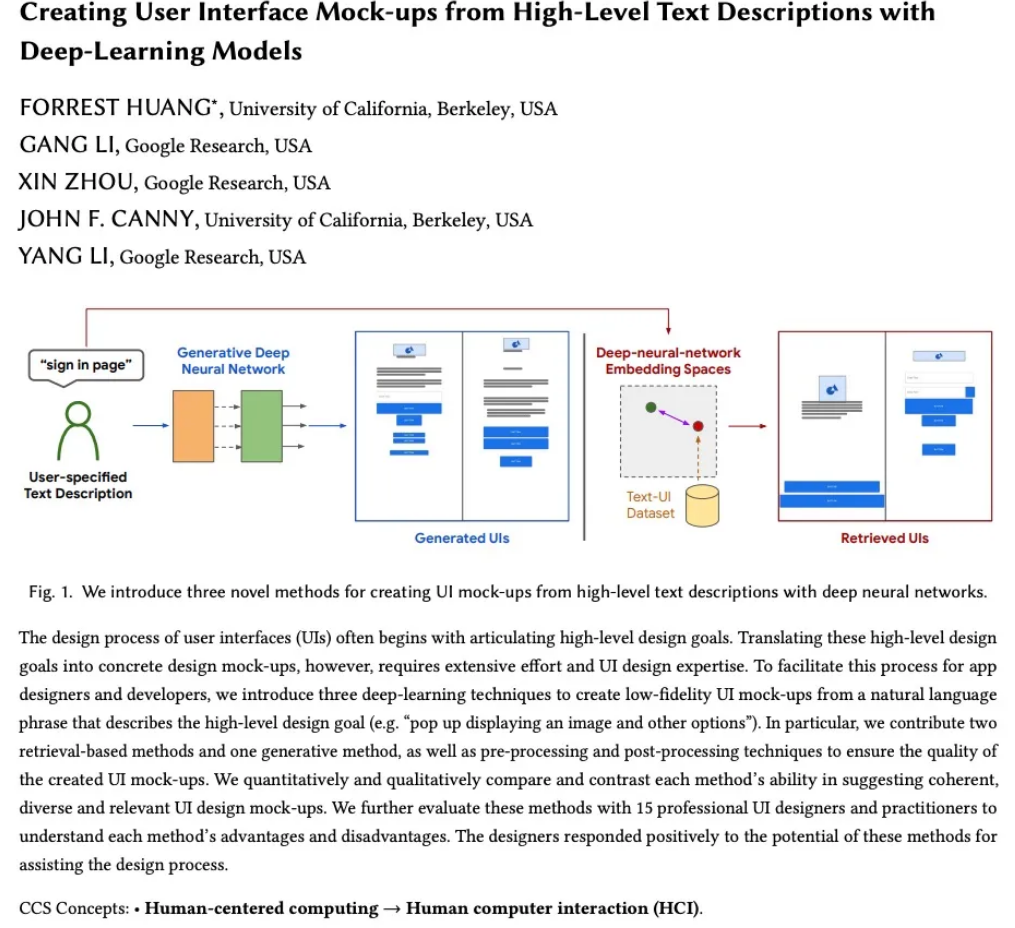

4、[AI] Creating User Interface Mock-ups from High-Level Text Descriptions with Deep-Learning Models

F Huang, G Li, X Zhou, J F. Canny, Y Li

[UC Berkeley & Google Research]

用深度学习从高层次文本描述中创建用户界面原型。用户界面(UI)设计过程通常从阐述高层次设计目标开始。然而,将这些高层次设计目标转化为具体设计模型,需要大量的努力和UI设计的专业知识。为促进应用程序设计师和开发人员的这一过程,本文提出三种深度学习技术,从描述高层次设计目标的自然语言短语(如"弹出显示图片和其他选项")中创建低保真用户界面模型。提供了两种基于检索的方法和一种生成的方法,以及预处理和后处理技术,以确保所创建的UI模型的质量。从数量和质量上比较和对比了每种方法在提供连贯、多样和相关的用户界面设计模型方面的能力。与15位专业的UI设计师和从业者一起进一步评估这些方法,以了解每种方法的优势和劣势。设计师们对这些方法在协助设计过程中的潜力给予了积极的回应。

The design process of user interfaces (UIs) often begins with articulating high-level design goals. Translating these high-level design goals into concrete design mock-ups, however, requires extensive effort and UI design expertise. To facilitate this process for app designers and developers, we introduce three deep-learning techniques to create low-fidelity UI mock-ups from a natural language phrase that describes the high-level design goal (e.g. “pop up displaying an image and other options”). In particular, we contribute two retrieval-based methods and one generative method, as well as pre-processing and post-processing techniques to ensure the quality of the created UI mock-ups. We quantitatively and qualitatively compare and contrast each method’s ability in suggesting coherent, diverse and relevant UI design mock-ups. We further evaluate these methods with 15 professional UI designers and practitioners to understand each method’s advantages and disadvantages. The designers responded positively to the potential of these methods for assisting the design process.

https://weibo.com/1402400261/KDocYoPVO

5、[CL] Semantics-aware Attention Improves Neural Machine Translation

A Slobodkin, L Choshen, O Abend

[The Hebrew University of Jerusalem]

基于语义感知注意力改善神经机器翻译。将句法结构整合到Transformer机器翻译已显示出积极的成果,但还没有工作试图对语义结构进行整合。本文提出两种将语义信息注入Transformer的新型无参数方法,这两种方法都依赖于对(部分)注意力头的语义感知掩码。其中一种方法是通过一个场景感知自注意力(SASA)头在编码器上操作。另一种是在解码器上,通过一个场景感知交叉注意力(SACrA)头。在四种语言对中,与vanilla Transformer和句法感知模型相比,有持续的改进。在某些语言对中同时使用语义和句法结构时,有额外的收益。

The integration of syntactic structures into Transformer machine translation has shown positive results, but to our knowledge, no work has attempted to do so with semantic structures. In this work we propose two novel parameter-free methods for injecting semantic information into Transformers, both rely on semantics-aware masking of (some of) the attention heads. One such method operates on the encoder, through a Scene-Aware Self-Attention (SASA) head. Another on the decoder, through a Scene-Aware Cross-Attention (SACrA) head. We show a consistent improvement over the vanilla Transformer and syntax-aware models for four language pairs. We further show an additional gain when using both semantic and syntactic structures in some language pairs.

https://weibo.com/1402400261/KDofAxQwE

另外几篇值得关注的论文:

[CV] Meta Internal Learning

元内在学习

R Bensadoun, S Gur, T Galanti, L Wolf

[Tel Aviv University]

https://weibo.com/1402400261/KDojivRre

[LG] Crystal Diffusion Variational Autoencoder for Periodic Material Generation

面向周期材料生成的晶体扩散变分自编码器

T Xie, X Fu, O Ganea, R Barzilay, T Jaakkola

[MIT]

https://weibo.com/1402400261/KDokHETz1

[LG] Averting A Crisis In Simulation-Based Inference

避免基于仿真推理中的危机

J Hermans, A Delaunoy, F Rozet, A Wehenkel, G Louppe

[University of Liege]

https://weibo.com/1402400261/KDom4tlZO

[AS] Neural Dubber: Dubbing for Silent Videos According to Scripts

神经(网络)配音器:根据脚本为无声视频配音

C Hu, Q Tian, T Li, Y Wang, Y Wang, H Zhao

[Tsinghua University & ByteDance]

https://weibo.com/1402400261/KDonHz1C6

内容中包含的图片若涉及版权问题,请及时与我们联系删除

评论

沙发等你来抢