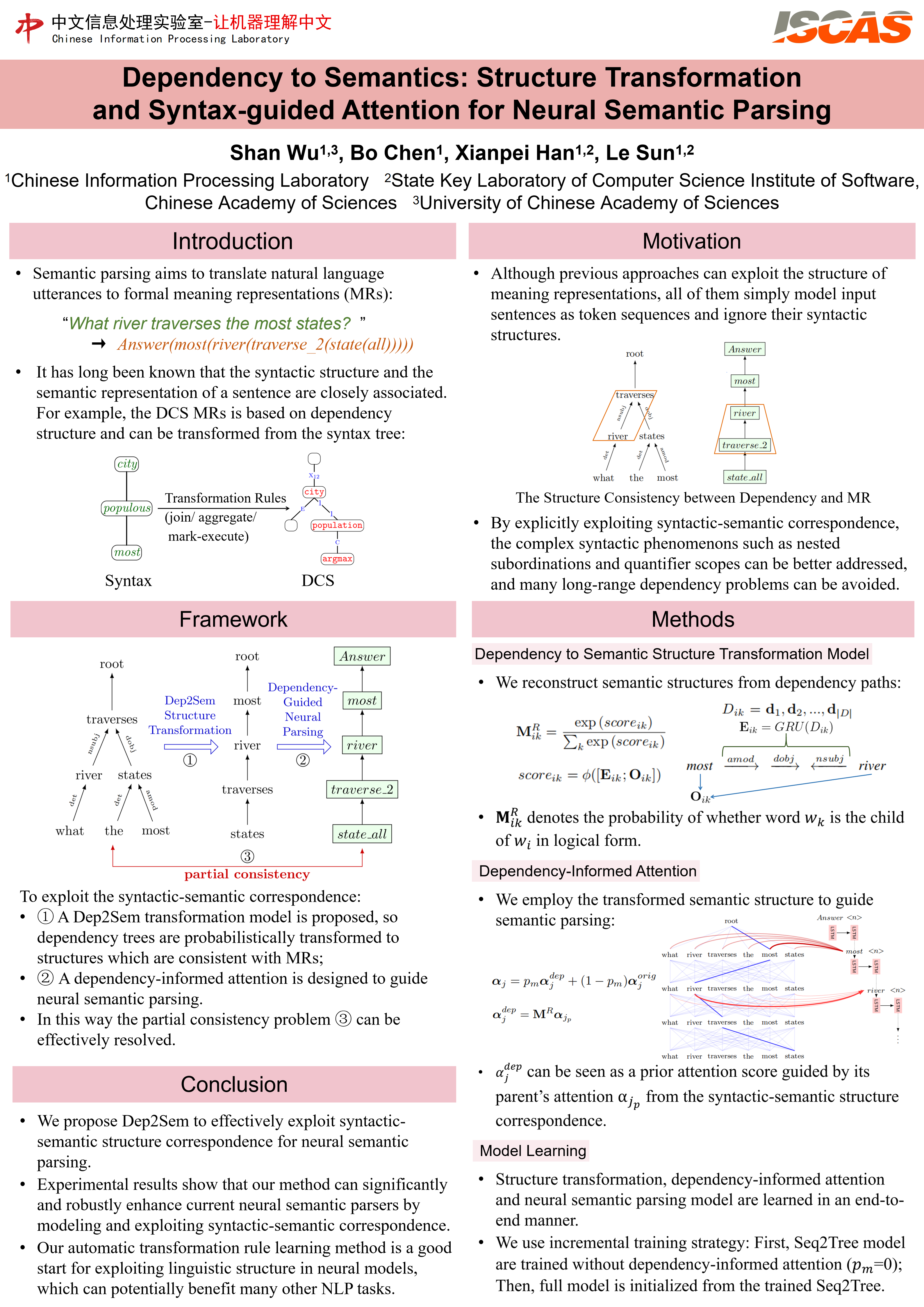

It has long been known that the syntactic structure and the semantic representation of a sentence are closely associated. However, exploiting this syntactic-semantic correspondence in end-to-end neural semantic parsing remains a hard problem, mainly because the two structures are only partially consistent. In this paper, we propose Dep2Sem, a neural dependency-to-semantics transformation model that can effectively learn the structural correspondence between dependency trees and formal meaning representations. Based on Dep2Sem, we propose a dependency-informed attention mechanism that exploits syntactic structure for neural semantic parsing. Experiments on the GEO, JOBS, and ATIS benchmarks show that our approach can significantly enhance the performance of neural semantic parsers.
