来自今天的爱可可AI前沿推介

[CV] Uncovering the Disentanglement Capability in Text-to-Image Diffusion Models

Q Wu, Y Liu, H Zhao, A Kale, T Bui, T Yu, Z Lin, Y Zhang, S Chang

[UC, Santa Barbara & Adobe Research & MIT-IBM Watson AI Lab]

文本到图像扩散模型解缠能力挖掘

要点:

-

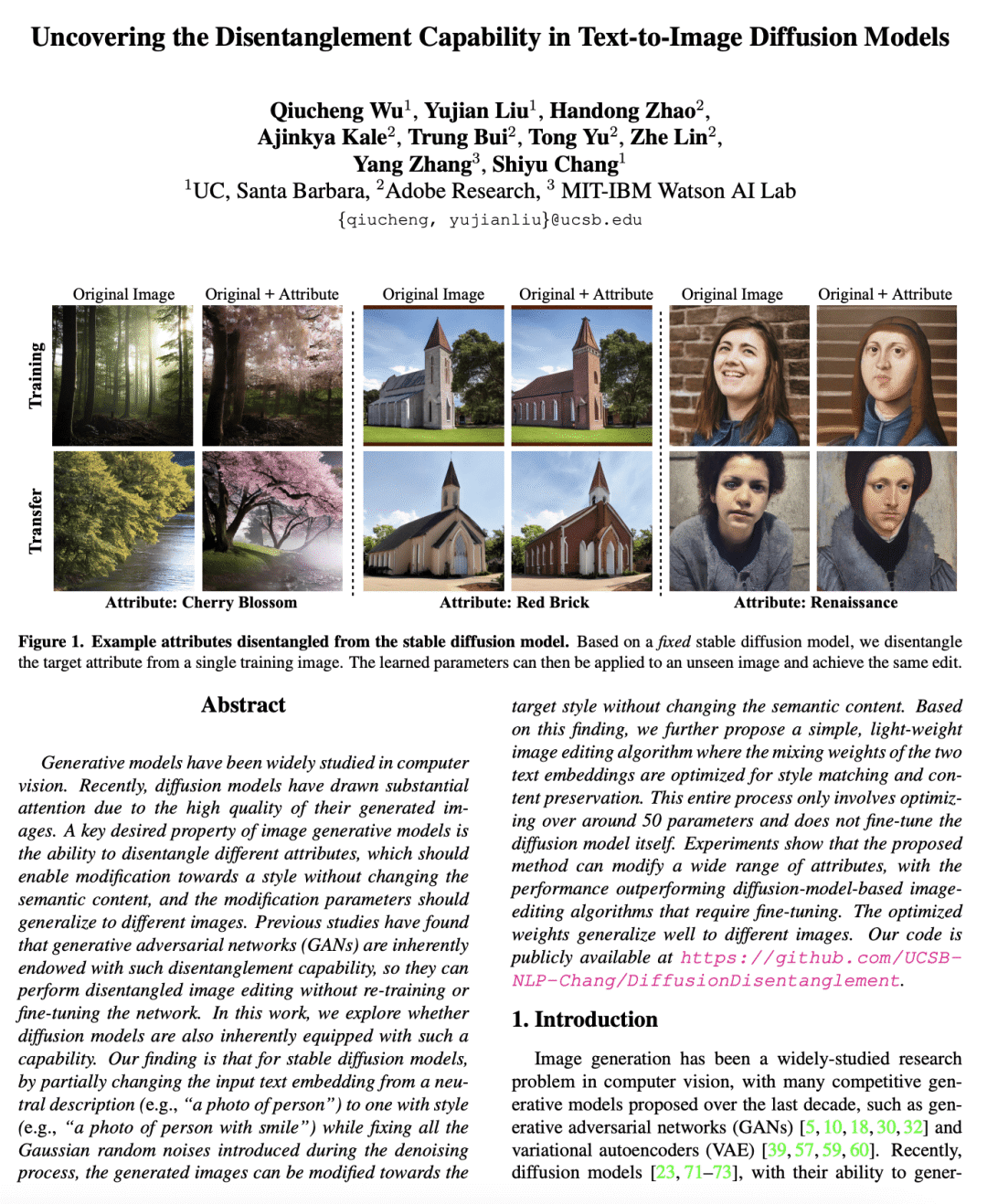

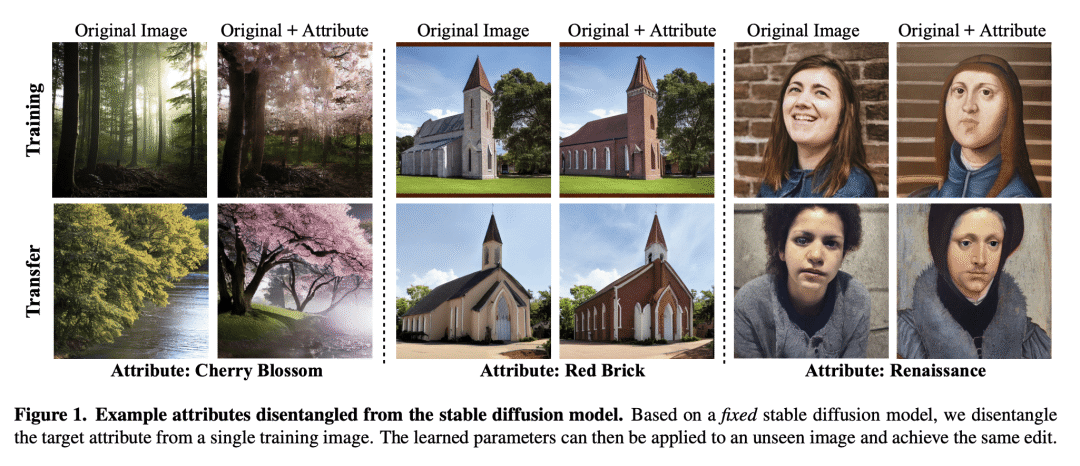

对 stable diffusion 模型的解缠特性进行研究,发现 stable diffusion 模型本身具有解缠不同属性的能力,从而可以在不改变语义内容的情况下将图像修改为其他样式; -

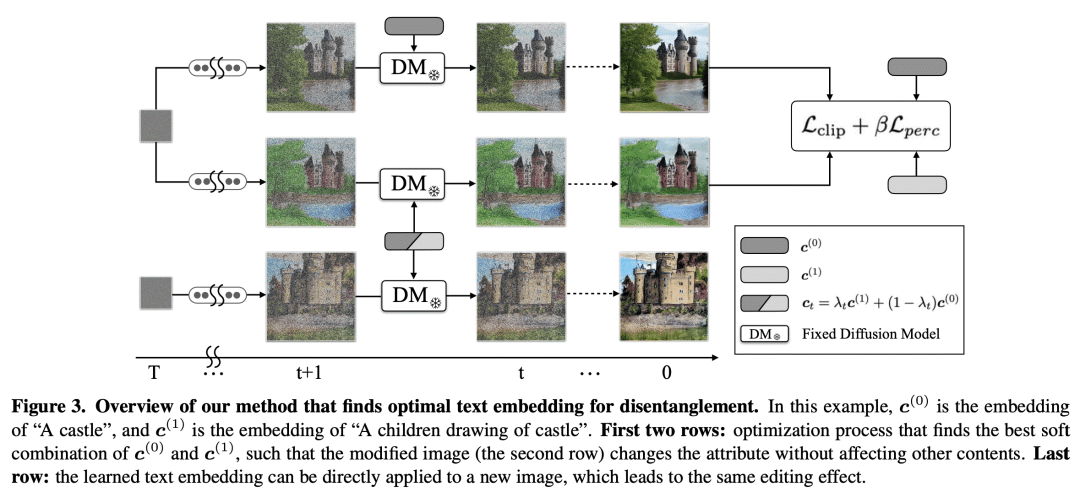

提出一种简单轻量的解缠算法,通过优化两个文本嵌入的混合权重,来进行样式匹配和内容保持,而不需要微调扩散模型,只需优化大约50个参数; -

证明该方法可以修改广泛的属性,并优于其他需要微调的基于扩散模型的图像编辑算法。

一句话总结:

发现 stable diffusion 模型本身具有解缠能力,提出一种简单、轻量的解缠算法,通过优化文本嵌入混合权重,无需对扩散模型进行微调,可以很好地推广到不同的图像,优于其他方法。

摘要:

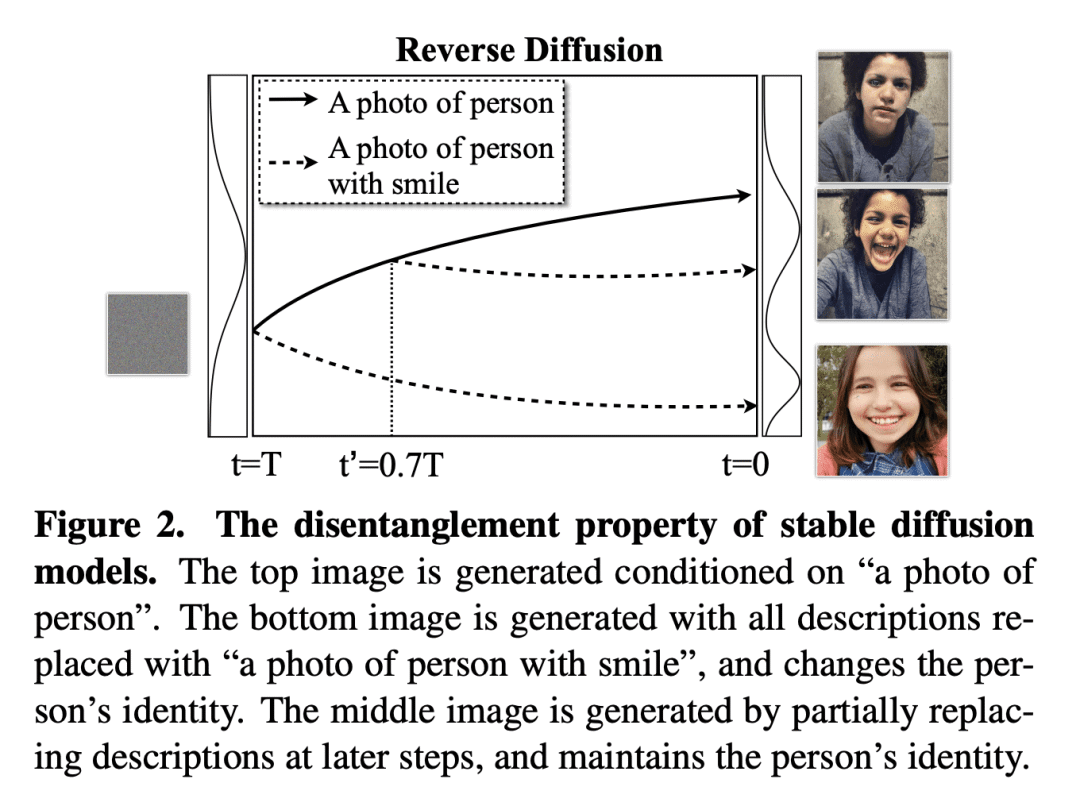

生成模型在计算机视觉中被广泛研究。最近,扩散模型因其生成的图像质量高而备受关注。图像生成模型的一个关键期望属性是能解缠不同的属性,这应该能够在不更改语义内容的情况下对样式进行修改,且修改参数应推广到不同的图像。之前的研究发现,生成对抗网络(GAN)本质上具有这种解缠能力,可以在不重新训练或微调网络的情况下执行解缠的图像编辑。本文探讨了扩散模型是否也具有这种能力。对于 stable diffusion 模型,通过将输入文本嵌入从中性描述(例如“人的照片”)部分更改为风格化的描述(例如,“微笑的人的照片”),同时修复去噪过程中引入的所有高斯随机噪声,生成的图像可以在不更改语义内容的情况下修改为目标样式。基于这一发现,本文进一步提出一种简单、轻量的图像编辑算法,其中针对样式匹配和内容保持对两个文本嵌入的混合权重进行了优化。整个过程只涉及优化大约50个参数,并且不会微调扩散模型本身。该方法可修改广泛的属性,其性能优于需要微调的基于扩散模型的图像编辑算法。优化的权重很好地推广到不同的图像。

Generative models have been widely studied in computer vision. Recently, diffusion models have drawn substantial attention due to the high quality of their generated images. A key desired property of image generative models is the ability to disentangle different attributes, which should enable modification towards a style without changing the semantic content, and the modification parameters should generalize to different images. Previous studies have found that generative adversarial networks (GANs) are inherently endowed with such disentanglement capability, so they can perform disentangled image editing without re-training or fine-tuning the network. In this work, we explore whether diffusion models are also inherently equipped with such a capability. Our finding is that for stable diffusion models, by partially changing the input text embedding from a neutral description (e.g., "a photo of person") to one with style (e.g., "a photo of person with smile") while fixing all the Gaussian random noises introduced during the denoising process, the generated images can be modified towards the target style without changing the semantic content. Based on this finding, we further propose a simple, light-weight image editing algorithm where the mixing weights of the two text embeddings are optimized for style matching and content preservation. This entire process only involves optimizing over around 50 parameters and does not fine-tune the diffusion model itself. Experiments show that the proposed method can modify a wide range of attributes, with the performance outperforming diffusion-model-based image-editing algorithms that require fine-tuning. The optimized weights generalize well to different images.

论文链接:https://arxiv.org/abs/2212.08698

内容中包含的图片若涉及版权问题,请及时与我们联系删除

评论

沙发等你来抢