From today's 爱可可 AI frontier recommendations

[LG] The Flan Collection: Designing Data and Methods for Effective Instruction Tuning

S Longpre, L Hou, T Vu, A Webson, H W Chung, Y Tay, D Zhou, Q V. Le, B Zoph, J Wei, A Roberts

[Google Research]


要点:

-

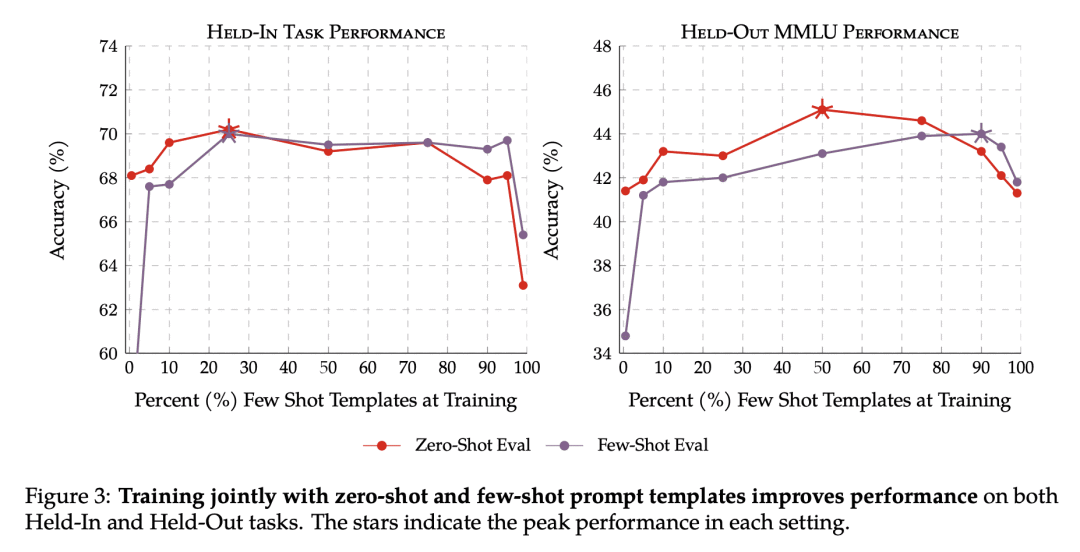

在这两种设置下,混合零样本提示和少样本提示的训练都能提高性能; -

有效的指令微调的关键技术,包括任务平衡、丰富性技术,以及混合提示设置训练; -

得到的Flan-T5比现有的开源指令微调提高3-17%
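To make "mixed prompt settings" concrete, here is a minimal sketch of turning one task's examples into a blend of zero-shot and few-shot training prompts. The template wording, the function names, and the `few_shot_fraction` and `num_shots` parameters are all illustrative assumptions, not the templates or ratios used in the Flan Collection.

```python
import random

def format_zero_shot(instruction, x):
    """Instruction followed directly by the query (no exemplars)."""
    return f"{instruction}\n\nInput: {x}\nOutput:"

def format_few_shot(instruction, exemplars, x):
    """Instruction, then solved exemplars, then the query."""
    shots = "\n\n".join(
        f"Input: {ex_in}\nOutput: {ex_out}" for ex_in, ex_out in exemplars
    )
    return f"{instruction}\n\n{shots}\n\nInput: {x}\nOutput:"

def build_mixed_prompts(instruction, dataset, few_shot_fraction=0.5,
                        num_shots=2, seed=0):
    """Return (prompt, target) pairs, mixing zero-shot and few-shot formats.

    few_shot_fraction is a hypothetical knob: the probability that an
    example is rendered with exemplars drawn from the rest of the dataset.
    """
    rng = random.Random(seed)
    pairs = []
    for i, (x, y) in enumerate(dataset):
        # Exemplars never include the example being asked about.
        pool = dataset[:i] + dataset[i + 1:]
        if pool and rng.random() < few_shot_fraction:
            exemplars = rng.sample(pool, min(num_shots, len(pool)))
            prompt = format_few_shot(instruction, exemplars, x)
        else:
            prompt = format_zero_shot(instruction, x)
        pairs.append((prompt, y))
    return pairs
```

Setting `few_shot_fraction` to 0.0 or 1.0 recovers pure zero-shot or pure few-shot training data, which is a convenient way to set up the kind of comparison the key points describe.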

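One-sentence summary:

Flan-T5 is a publicly available instruction-tuned model; its gains come from training with mixed prompt settings together with other key techniques such as task balancing and enrichment.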

Abstract:

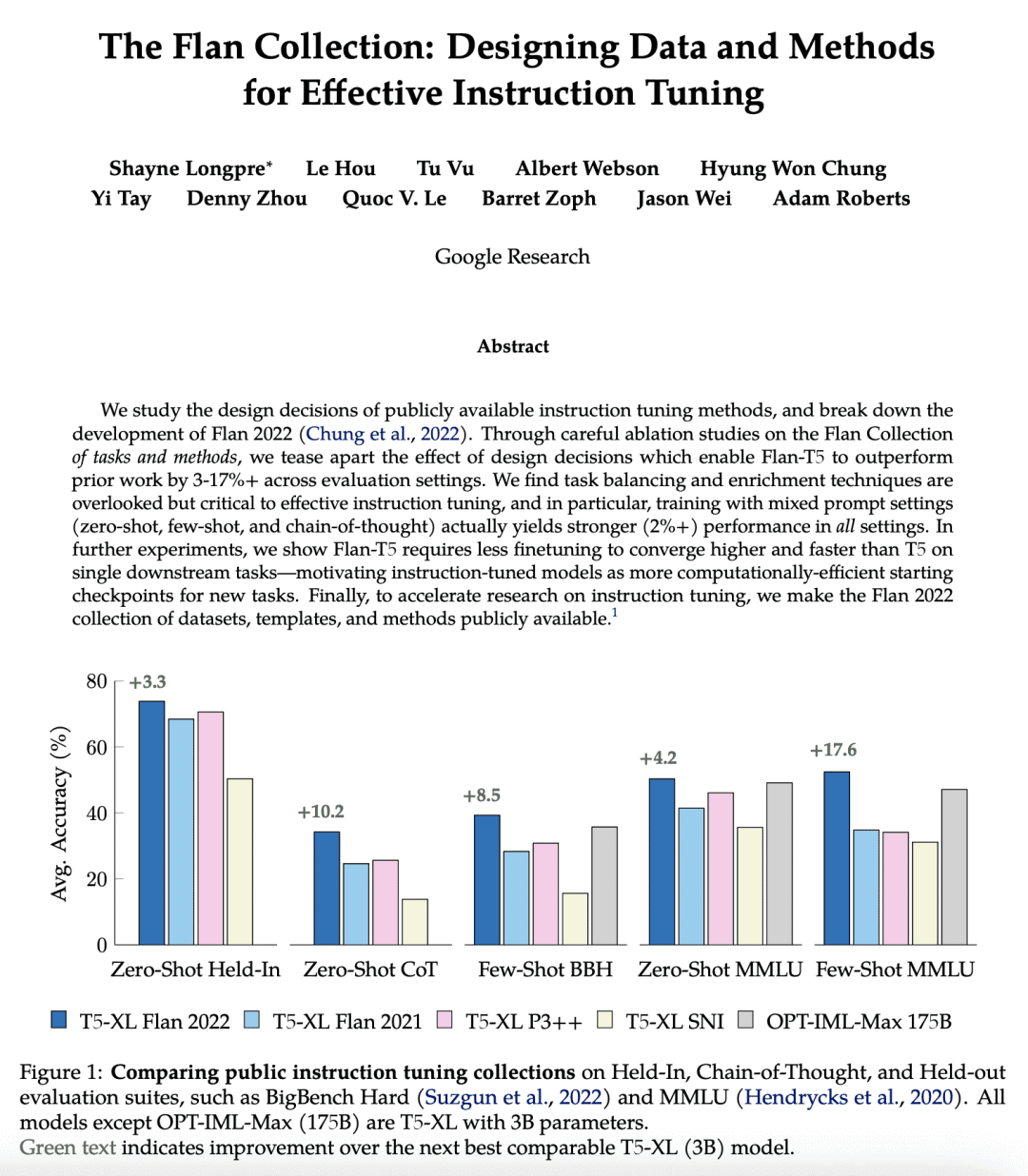

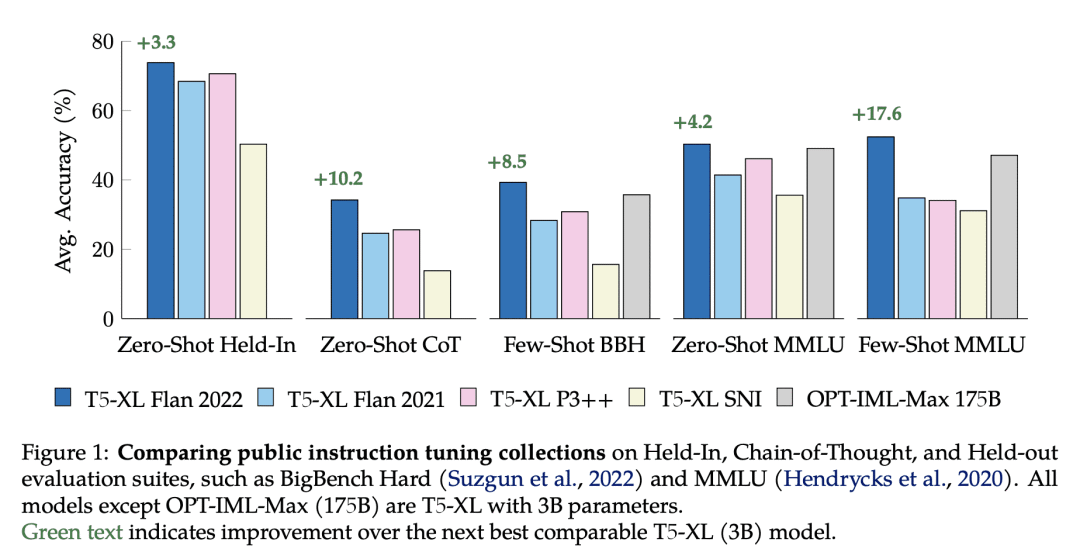

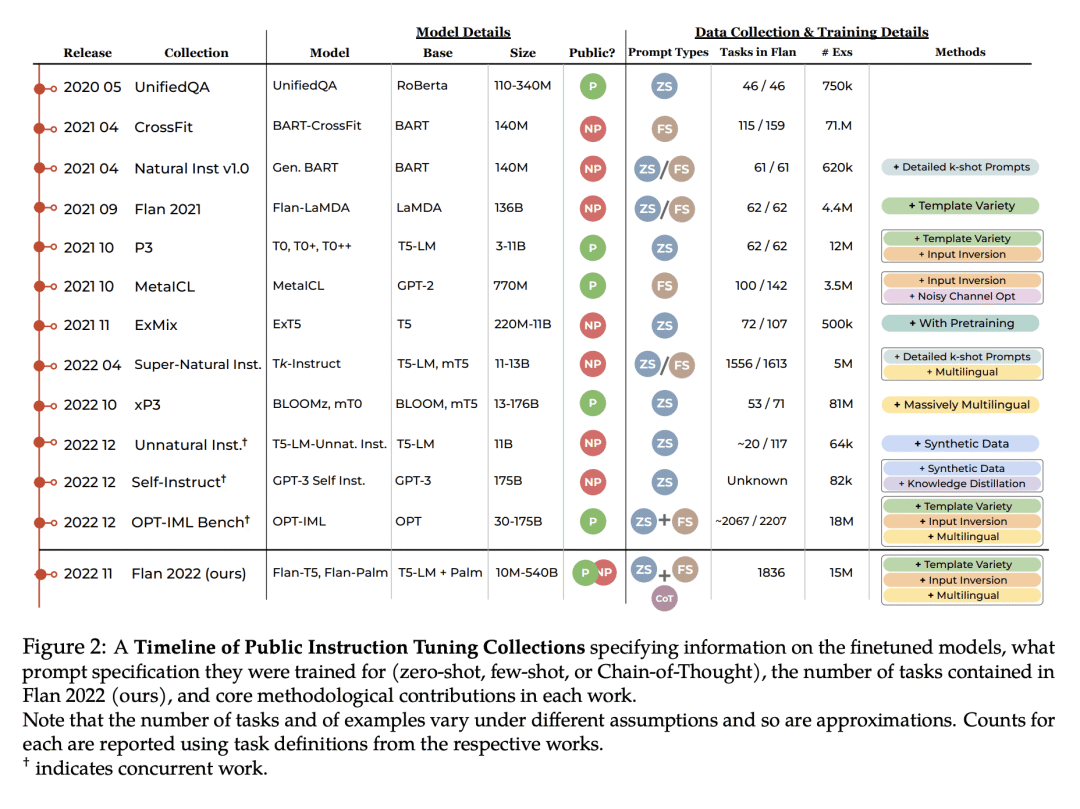

We study the design decisions of publicly available instruction tuning methods, and break down the development of Flan 2022 (Chung et al., 2022). Through careful ablation studies on the Flan Collection of tasks and methods, we tease apart the effect of design decisions which enable Flan-T5 to outperform prior work by 3-17%+ across evaluation settings. We find task balancing and enrichment techniques are overlooked but critical to effective instruction tuning, and in particular, training with mixed prompt settings (zero-shot, few-shot, and chain-of-thought) actually yields stronger (2%+) performance in all settings. In further experiments, we show Flan-T5 requires less finetuning to converge higher and faster than T5 on single downstream tasks, motivating instruction-tuned models as more computationally-efficient starting checkpoints for new tasks. Finally, to accelerate research on instruction tuning, we make the Flan 2022 collection of datasets, templates, and methods publicly available; the URL is given on the arXiv page.
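As a rough illustration of what "task balancing" can mean in practice, the sketch below computes sampling weights with capped examples-proportional mixing, a common multi-task heuristic. This is an assumption chosen for illustration: the summary above does not say which balancing scheme Flan 2022 uses, and the function name, task names, and cap value are hypothetical.

```python
def mixing_weights(task_sizes, cap):
    """Sampling probability per task, proportional to min(size, cap).

    Capping keeps very large tasks from dominating the training mixture
    while leaving small tasks weighted by their true size.
    """
    capped = {name: min(size, cap) for name, size in task_sizes.items()}
    total = sum(capped.values())
    if total == 0:
        raise ValueError("at least one task must contain examples")
    return {name: count / total for name, count in capped.items()}

# Hypothetical task sizes: without a cap, "large_task" would receive
# 1000 / 1200 of the samples; with cap=300 it receives 300 / 500.
weights = mixing_weights(
    {"large_task": 1000, "small_a": 100, "small_b": 100}, cap=300
)
```

Lowering the cap moves the mixture toward uniform sampling over tasks, and raising it moves the mixture toward sampling in proportion to raw dataset size.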

Paper link: https://arxiv.org/abs/2301.13688
