A S Pinto, A Kolesnikov, Y Shi, L Beyer, X Zhai

[Google Research]

Tuning computer vision models with task rewards

要点:

-

模型预测和预期使用之间的错位,可能不利于计算机视觉模型的部署; -

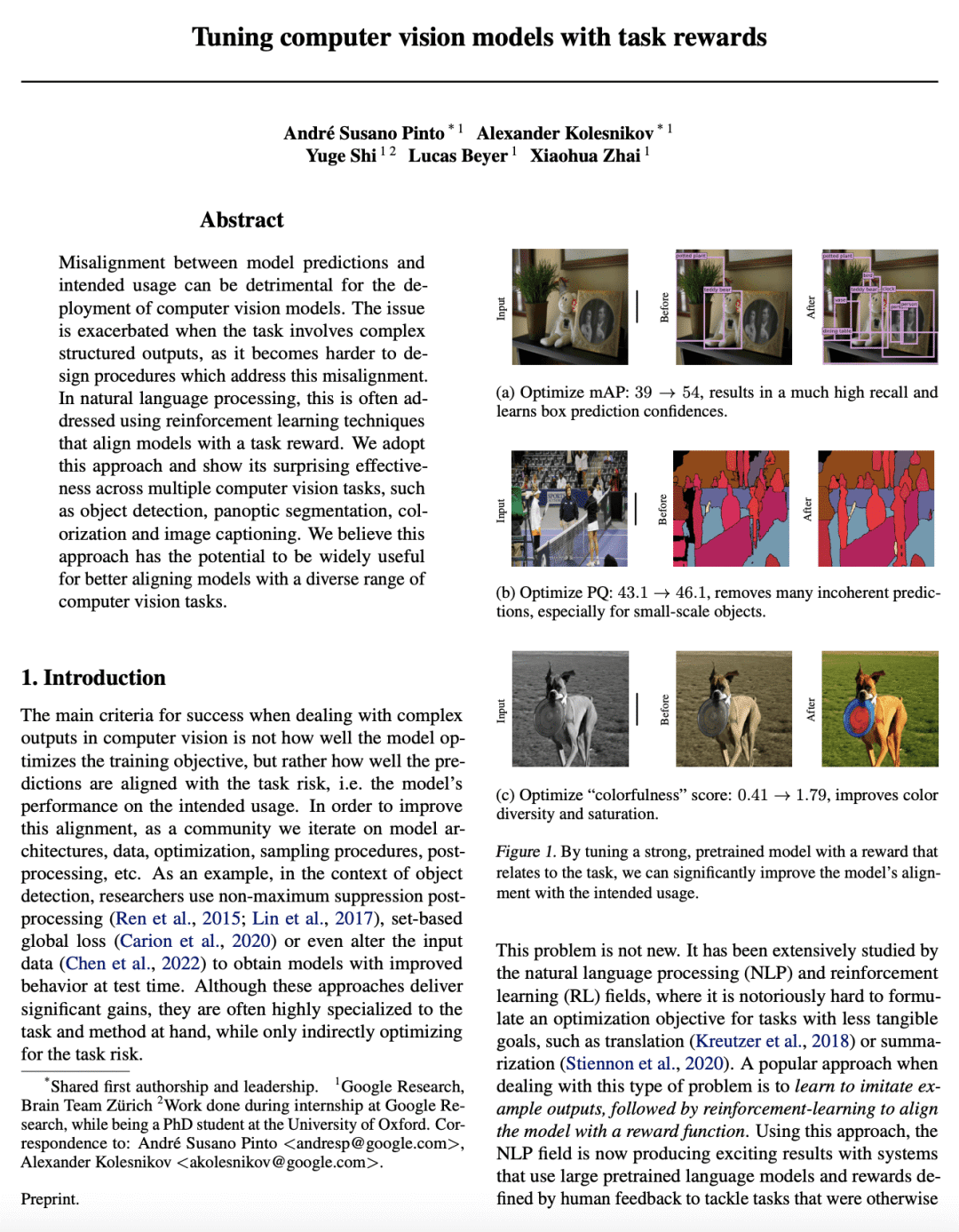

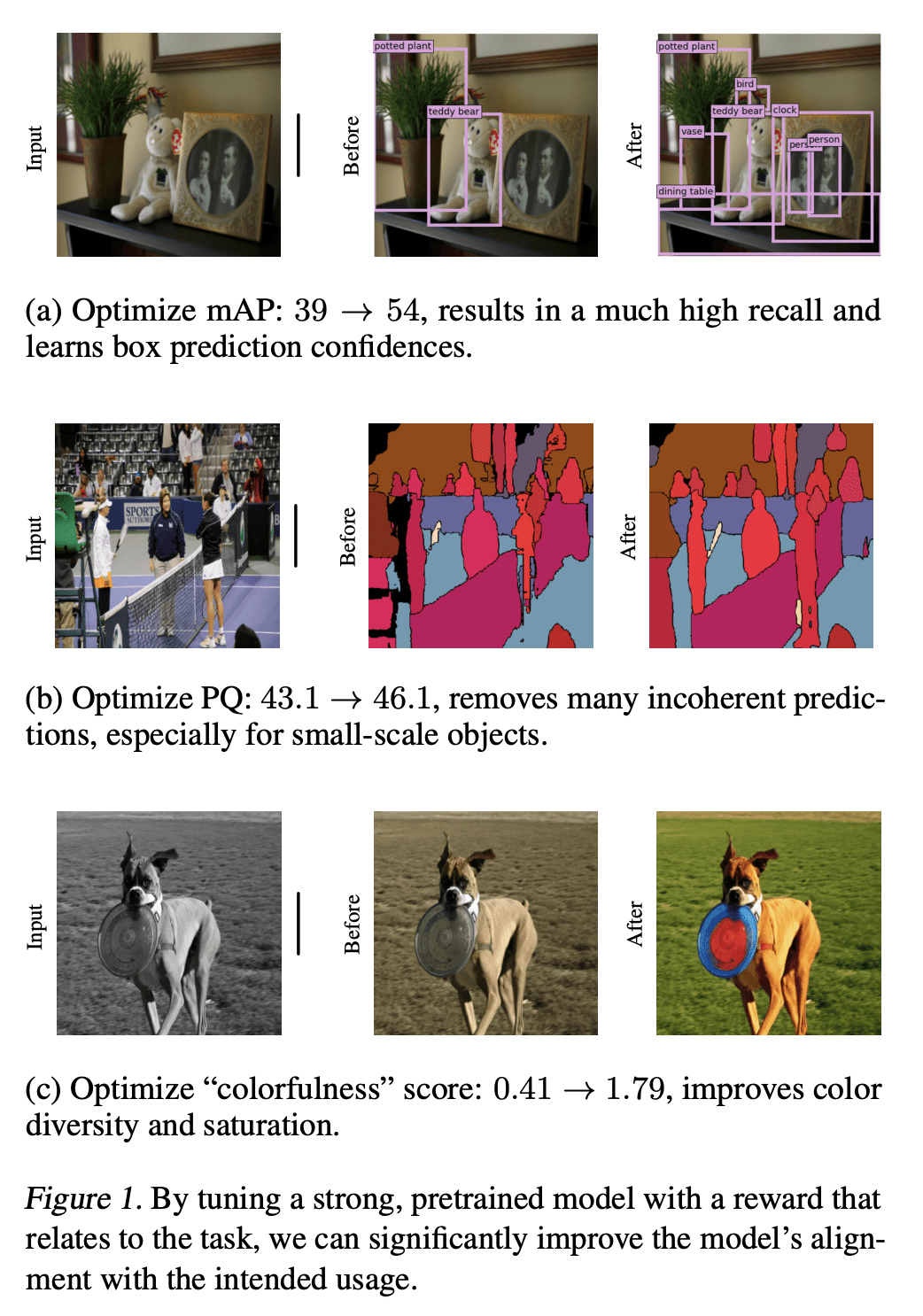

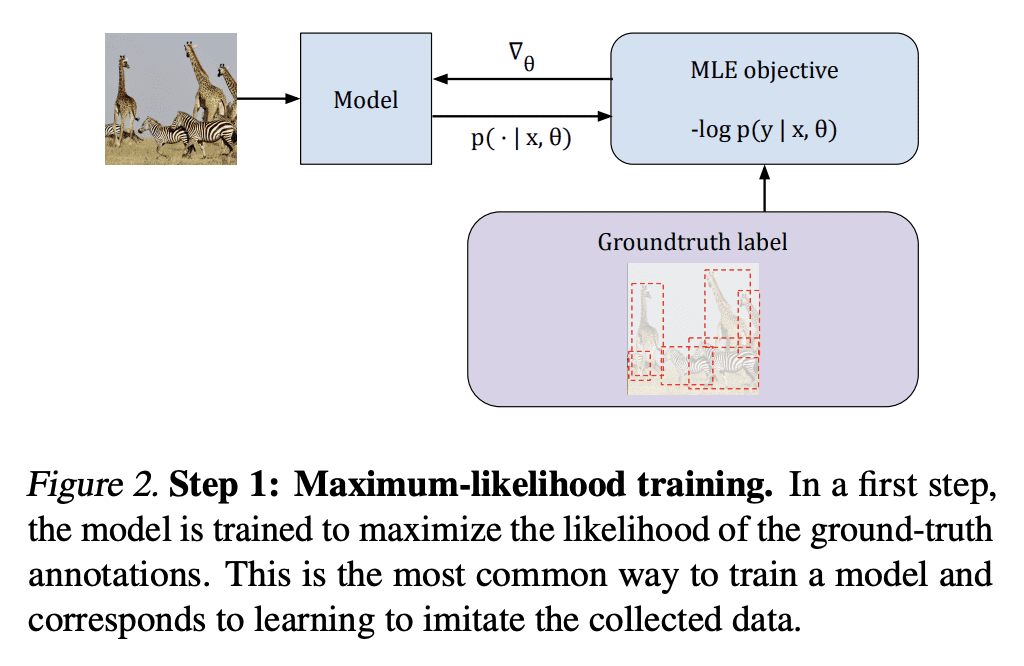

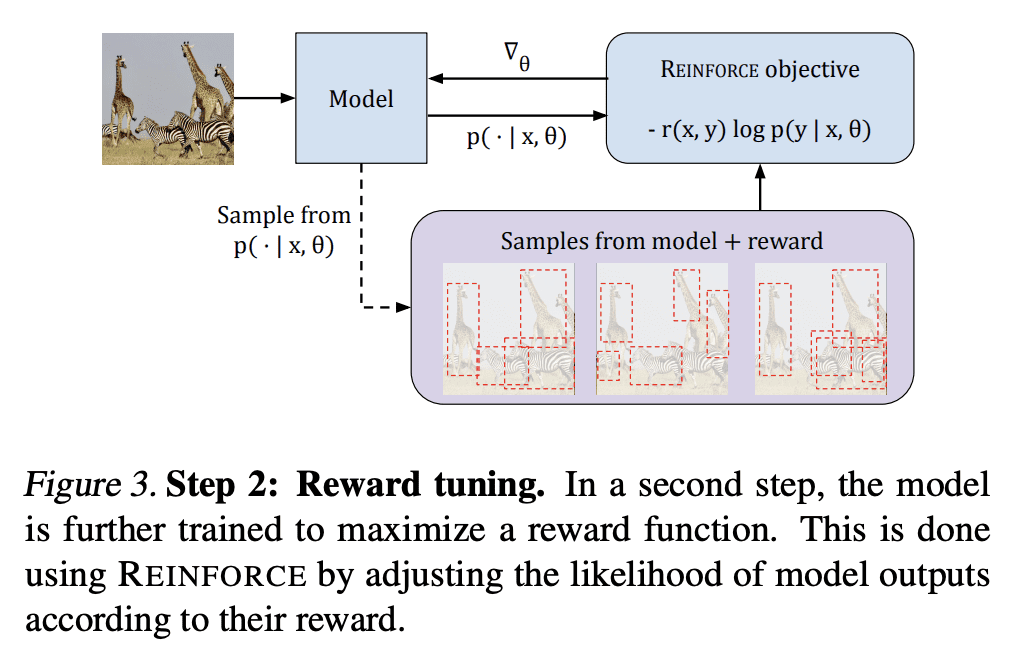

用强化学习技术的奖励优化,可以改善计算机视觉任务,如目标检测、全景分割、着色和图像描述,即使对于没有其他任务特定组件训练的模型也是如此; -

与最近在描述方面的工作具有竞争性,可以用来更精确地控制模型如何对齐非平凡任务风险; -

有可能广泛用于更好地将模型与不同的计算机视觉任务对齐。

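One-sentence summary:

Reward optimization with reinforcement learning techniques is an effective way to align computer vision models with task rewards, and it can improve a wide range of computer vision tasks, including object detection, panoptic segmentation, colorization, and image captioning.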

Misalignment between model predictions and intended usage can be detrimental for the deployment of computer vision models. The issue is exacerbated when the task involves complex structured outputs, as it becomes harder to design procedures which address this misalignment. In natural language processing, this is often addressed using reinforcement learning techniques that align models with a task reward. We adopt this approach and show its surprising effectiveness across multiple computer vision tasks, such as object detection, panoptic segmentation, colorization and image captioning. We believe this approach has the potential to be widely useful for better aligning models with a diverse range of computer vision tasks.
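To make "aligning a model with a task reward" concrete, here is a toy illustration using REINFORCE, one standard policy-gradient estimator. It is a minimal sketch under stated assumptions, not the authors' implementation: the tiny categorical "model", the names `reinforce_step` and `tune`, the mean-reward baseline, and the learning rate and sample count are all hypothetical choices made for illustration. A real vision model would replace the logits with a network that produces structured outputs, and the reward with a task metric.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def reinforce_step(logits, reward_fn, rng, lr=0.5, num_samples=8):
    """One REINFORCE update on a categorical policy (illustrative only).

    Samples outputs from the current policy, scores them with reward_fn,
    and moves the logits along advantage * grad(log p(sample)).
    """
    probs = softmax(logits)
    samples = rng.choices(range(len(logits)), weights=probs, k=num_samples)
    rewards = [reward_fn(y) for y in samples]
    # Assumption: a simple mean-reward baseline to reduce variance.
    baseline = sum(rewards) / len(rewards)
    grad = [0.0] * len(logits)
    for y, r in zip(samples, rewards):
        advantage = r - baseline
        for k in range(len(logits)):
            # d log p(y) / d logit_k = 1[k == y] - p_k
            indicator = 1.0 if k == y else 0.0
            grad[k] += advantage * (indicator - probs[k])
    # Gradient ascent on expected reward.
    return [z + lr * g / num_samples for z, g in zip(logits, grad)]


def tune(reward_fn, num_outputs=4, steps=300, seed=0):
    """Start from a uniform policy and tune it toward higher reward."""
    rng = random.Random(seed)
    logits = [0.0] * num_outputs
    for _ in range(steps):
        logits = reinforce_step(logits, reward_fn, rng)
    return softmax(logits)


if __name__ == "__main__":
    # Hypothetical task reward: output 2 is the only "good" prediction.
    final_probs = tune(lambda y: 1.0 if y == 2 else 0.0)
    print(final_probs)
```

The only training signal here is the scalar reward for sampled outputs, so the reward function does not need to be differentiable. That property is what makes this family of methods a natural fit for task metrics defined on complex structured outputs.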

Paper: https://arxiv.org/abs/2302.08242
