Decoupling Human and Camera Motion from Videos in the Wild

V Ye, G Pavlakos, J Malik, A Kanazawa

[UC Berkeley]

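Key points:

- The proposed method recovers global 3D human trajectories from challenging in-the-wild videos by combining relative camera estimates with a learned human motion prior.
- The method is robust: it outperforms existing methods on the Egobody dataset and produces plausible trajectories for scenes with multiple people and challenging camera motion.
- The camera trajectory is optimized to agree with both the scene parallax and the 2D reprojection of the real-world human trajectories, so the method can handle complex videos of people.
- The recovered camera scale makes it possible to reason about the motion of multiple people in a shared coordinate frame, which improves downstream tracking performance on PoseTrack.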

一句话总结:

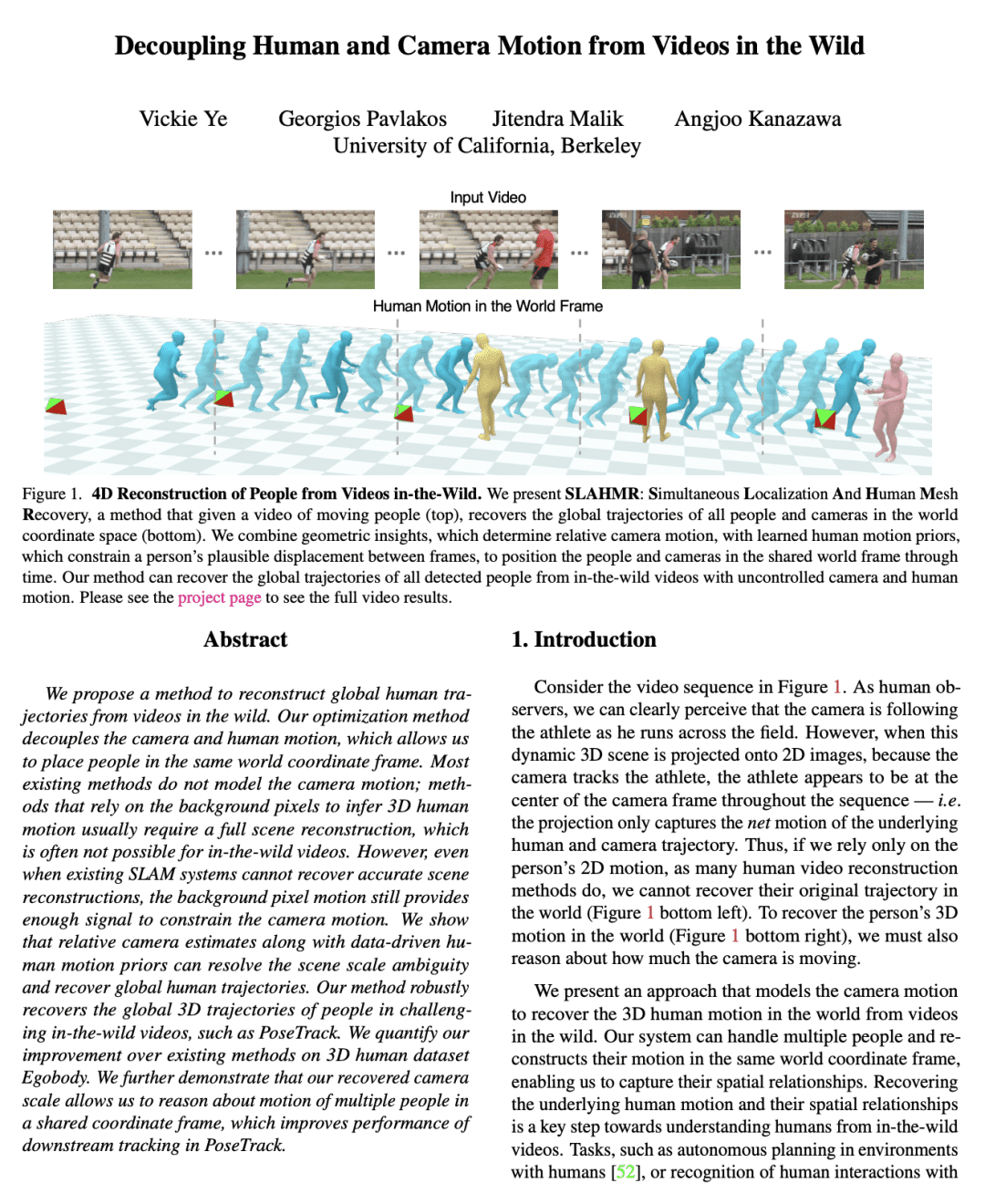

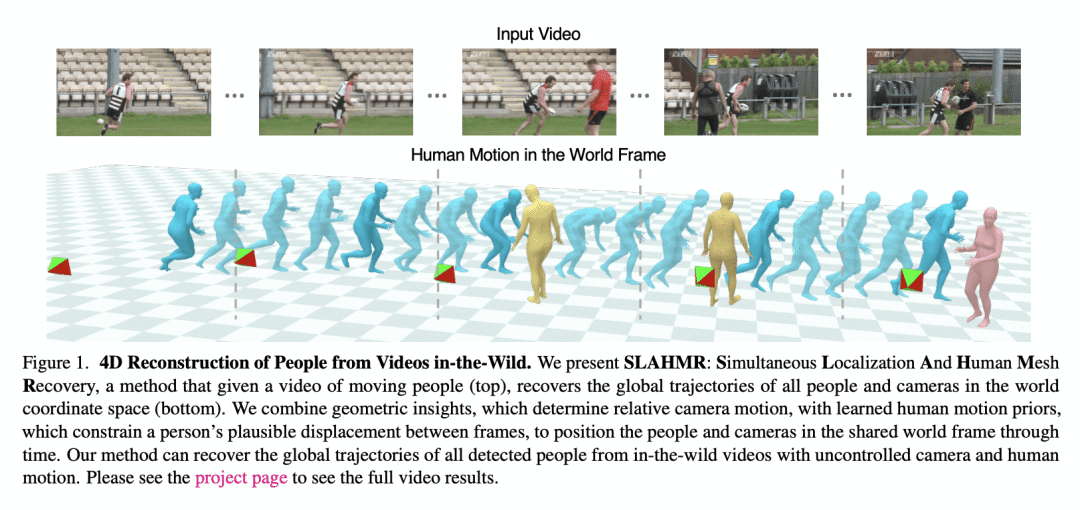

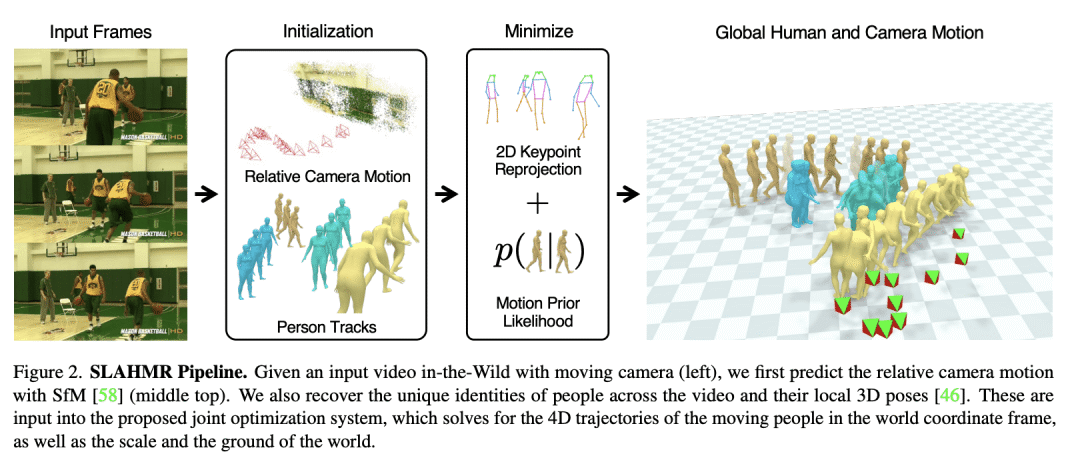

提出一种方法,通过对摄像机和人体运动进行解耦,利用相对摄像机估计和学到的人体运动先验,从移动摄像机的挑战性视频中恢复人在世界坐标帧中的运动轨迹。

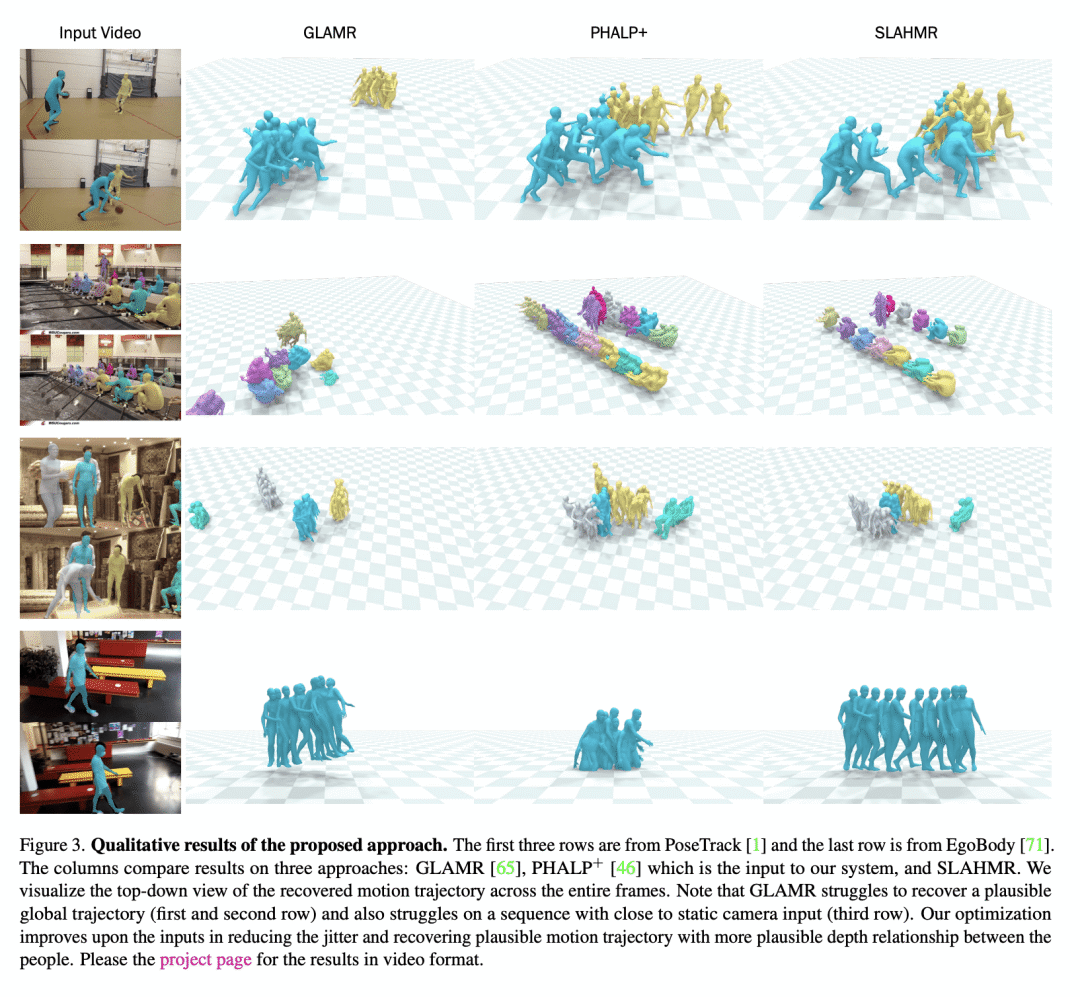

We propose a method to reconstruct global human trajectories from videos in the wild. Our optimization method decouples the camera and human motion, which allows us to place people in the same world coordinate frame. Most existing methods do not model the camera motion; methods that rely on the background pixels to infer 3D human motion usually require a full scene reconstruction, which is often not possible for in-the-wild videos. However, even when existing SLAM systems cannot recover accurate scene reconstructions, the background pixel motion still provides enough signal to constrain the camera motion. We show that relative camera estimates, along with data-driven human motion priors, can resolve the scene scale ambiguity and recover global human trajectories. Our method robustly recovers the global 3D trajectories of people in challenging in-the-wild videos, such as PoseTrack. We quantify our improvement over existing methods on the 3D human dataset Egobody. We further demonstrate that our recovered camera scale allows us to reason about the motion of multiple people in a shared coordinate frame, which improves the performance of downstream tracking in PoseTrack. Code and video results are linked from the arXiv page.
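The scale ambiguity mentioned in the abstract can be made concrete with a toy example. The sketch below is not the authors' implementation; the function name `human_world_positions`, the variable names, and the numbers are all assumptions chosen for illustration. It assumes a SLAM-style system returns camera-to-world rotations and translations whose length is known only up to an unknown scale `alpha`, and shows that the same per-frame camera-frame observations of a person map to very different world trajectories depending on `alpha`.

```python
import numpy as np


def human_world_positions(R_wc, t_wc, p_cam, alpha):
    """Map camera-frame root positions into the world frame (illustrative only).

    R_wc:  (T, 3, 3) camera-to-world rotations
    t_wc:  (T, 3) camera-to-world translations, known only up to scale
    p_cam: (T, 3) human root positions in each frame's camera coordinates
    alpha: scalar scale applied to the camera translations (hypothetical name)
    """
    return np.einsum("tij,tj->ti", R_wc, p_cam) + alpha * t_wc


# Toy setup (assumed values): the camera slides along x without rotating.
T = 5
R_wc = np.tile(np.eye(3), (T, 1, 1))
t_slam = np.stack([np.linspace(0.0, 1.0, T), np.zeros(T), np.zeros(T)], axis=1)

# Ground truth for the toy: the real camera moved 2x what SLAM reports,
# and the person walked 1 unit along x at a depth of 4 units.
true_alpha = 2.0
p_world_true = np.stack(
    [np.linspace(0.0, 1.0, T), np.zeros(T), np.full(T, 4.0)], axis=1
)

# Camera-frame observations consistent with that ground truth (rotation is identity).
p_cam = p_world_true - true_alpha * t_slam

# The same observations give a different world-frame walk for each candidate scale.
displacements = {}
for alpha in (0.5, 2.0, 4.0):
    traj = human_world_positions(R_wc, t_slam, p_cam, alpha)
    displacements[alpha] = traj[-1, 0] - traj[0, 0]
    print(f"alpha={alpha}: person's x displacement = {displacements[alpha]:.2f}")
```

Only one value of `alpha` reproduces the true walk; the others make the person appear to move backwards or cover three times the distance. The image evidence alone does not single out the right value, which is where, per the abstract, a data-driven human motion prior supplies the missing constraint.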

Paper link: https://arxiv.org/abs/2302.12827
