GLM-Dialog: Noise-tolerant Pre-training for Knowledge-grounded Dialogue Generation

Jing Zhang, Xiaokang Zhang, Daniel Zhang-Li, Jifan Yu, Zijun Yao, Zeyao Ma, Yiqi Xu, Haohua Wang, Xiaohan Zhang, Nianyi Lin, Sunrui Lu, Juanzi Li, Jie Tang

[Renmin University of China & Tsinghua University & Zhipu.AI]

Key points:

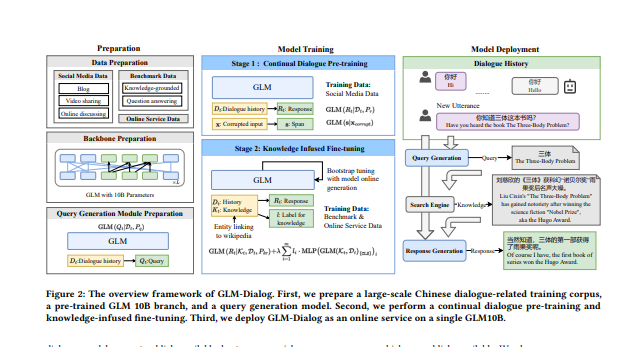

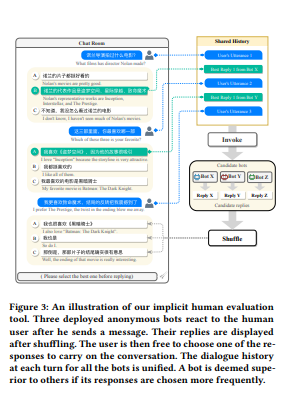

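1. The paper presents GLM-Dialog, a large language model (LLM) with 10B parameters that conducts knowledge-grounded conversation in Chinese, using a search engine to access knowledge on the Internet. GLM-Dialog offers a series of applicable techniques for exploiting various external knowledge, both helpful and noisy, which makes it possible to build robust knowledge-grounded dialogue LLMs with limited proper datasets. To evaluate GLM-Dialog more fairly, the paper also proposes a novel evaluation method that lets humans converse with multiple deployed bots simultaneously and compare their performance implicitly, rather than rating them explicitly with multidimensional metrics.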

One-sentence summary:

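Comprehensive evaluations, from automatic to human perspectives, demonstrate the advantages of GLM-Dialog over existing open-source Chinese dialogue models. The authors release the model checkpoint and source code and deploy the model as a WeChat application that interacts with users. They also offer an online evaluation platform to promote the development of open-source models and reliable dialogue evaluation systems, and release an additional easy-to-use toolkit covering short text entity linking, query generation, and helpful knowledge classification to support diverse applications. All the source code is available on GitHub.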

We present GLM-Dialog, a large-scale language model (LLM) with 10B parameters capable of knowledge-grounded conversation in Chinese, using a search engine to access Internet knowledge. GLM-Dialog offers a series of applicable techniques for exploiting various external knowledge, including both helpful and noisy knowledge, enabling the creation of robust knowledge-grounded dialogue LLMs with limited proper datasets. To evaluate GLM-Dialog more fairly, we also propose a novel evaluation method that allows humans to converse with multiple deployed bots simultaneously and compare their performance implicitly instead of explicitly rating them using multidimensional metrics. Comprehensive evaluations from automatic to human perspectives demonstrate the advantages of GLM-Dialog compared with existing open-source Chinese dialogue models. We release both the model checkpoint and source code, and also deploy the model as a WeChat application to interact with users. We offer our evaluation platform online in an effort to promote the development of open-source models and reliable dialogue evaluation systems. An additional easy-to-use toolkit consisting of short text entity linking, query generation, and helpful knowledge classification is also released to enable diverse applications. All the source code is available on GitHub.

https://arxiv.org/pdf/2302.14401.pdf
