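Counsellor of the State Council; Dean of Schwarzman College, Tsinghua University; Dean of the Institute for AI International Governance; Director of the China Institute for Science and Technology Policy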

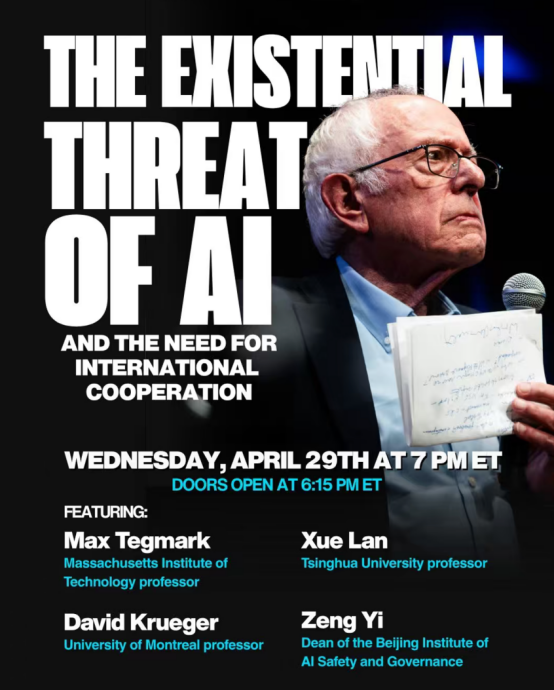

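On the morning of April 30, Beijing time, U.S. Senator Bernie Sanders convened a public meeting on Capitol Hill titled "AI Existential Risk and International Cooperation." Sanders chaired the meeting. The invited participants were Lan Xue, Senior Professor of Liberal Arts at Tsinghua University and Dean of the Institute for AI International Governance; Max Tegmark, professor of physics at the Massachusetts Institute of Technology (MIT) and founder of the Future of Life Institute; Yi Zeng, Dean of the Beijing Institute of AI Safety and Governance; and David Krueger, assistant professor at the Université de Montréal. The four scholars discussed in depth the prospects for superintelligence, whether AI poses an existential risk, paths for AI governance, and international cooperation.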

Transcript of Professor Lan Xue's part in the discussion at the April 30, 2026 hearing:

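Senator Sanders: Professor Xue, the international community has worked together to prevent nuclear war, and it has jointly responded to global pandemics that killed millions of people. In your view, has the international community come together to address the threats posed by artificial intelligence?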

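Lan Xue: Thank you, Senator. Thank you for inviting me to join this panel. As a scholar who has devoted his career to science and technology policy, I see the development of AI as a profound, transformative shift, and we must do everything we can to learn how to embrace it. I therefore greatly value this opportunity to learn and exchange views.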

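The international community has made efforts, and a number of multilateral mechanisms now exist: the AI summits that began in the UK in 2023 and were held in New Delhi this year; several mechanisms under the United Nations framework, including the scientific panel and the multilateral dialogue scheduled for July; and many other regional and bilateral initiatives.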

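These efforts, however, are fragmented and have not been as effective as they should be. There are three main reasons. First, AI risks are themselves highly uncertain: people may be unable to foresee the risks ahead or to understand how they evolve. Second, there is the so-called pacing problem: AI technology evolves far faster than governance mechanisms can be updated. Third, the current geopolitical landscape makes it hard for the major AI countries to reach consensus and jointly design effective mechanisms that build the necessary guardrails against AI risks.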

Senator Sanders: Let us now turn to what can be done. Professor Xue, what measures is China taking to regulate the risks posed by AI?

Lan Xue: China's overall approach is to seek a balance between innovation and safety, and to pursue it in an agile, adaptive way.

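First, on agile governance. Because regulation and policymaking always lag behind technological development, regulators must give up the insistence on getting everything right and complete in a single step and instead act quickly; gaps that remain can be closed later. A second element is that government and companies should stop playing cat and mouse and instead work together to address AI risks. A third element is that, unless there is a clear danger to the public interest, soft instruments such as nudges should come first, rather than resorting to heavy penalties at every turn.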

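Second, on adaptive governance. China has not tried to build a comprehensive, unified governance framework in a single stroke; it has taken an incremental, learning-by-doing path. Specifically, China first formulated a set of governance principles to guide all parties, and then enacted foundational legislation that gives AI systems a legal framework to operate in, including the Personal Information Protection Law, the Data Security Law, and the Cybersecurity Law. Alongside this, China has issued targeted regulations in response to new advances in AI. For example, in response to the rise of large language models, it introduced the Interim Measures for the Management of Generative Artificial Intelligence Services. These regulations are updated as the technology develops. Chinese companies have also made voluntary commitments on safety practices (for example, at the 2025 World Artificial Intelligence Conference, leading Chinese AI companies signed the latest version of the commitments).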

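Taken together, these elements give China a multi-layered system for governing AI risks. The system still has shortcomings and problems, but on the whole it has been able to support the balanced development of AI in China.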

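Senator Sanders: Professor Xue, in an earlier lecture you called geopolitical rivalry "the big elephant in the room that people do not necessarily want to talk about." Today, let us talk about it directly. How can China, the United States, and other countries work together to advance international cooperation on AI?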

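Lan Xue: First, we must correct an inaccurate narrative, namely that the United States and China are engaged in an AI "race." Many of today's leading companies do come from the United States and China, but disruptive innovation could overturn that landscape at any time. It is entirely possible that, some years from now, companies and models from other countries will come to the fore. Fundamentally, the real competition is among companies worldwide over who can offer better-performing models and services while keeping the risks to society to a minimum.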

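Second, while acknowledging the reality of geopolitical rivalry, we should look for where the shared interests of the United States and China lie, and building guardrails against AI risks is at the core of those shared interests. If one country is unsafe, no country can stay safe on its own. AI safety is therefore an area where the two countries can cooperate in depth, including jointly setting safety standards, developing safety technologies, and establishing emergency response protocols.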

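Third, the United States and China can work together on capacity building around the world to help bridge the "AI divide." Imagine a world in which only a few countries and a few companies hold the most powerful technological tools, while the rest of the world has nothing and sinks into poverty. That picture is chilling. I therefore believe the two countries share an interest in working together to bridge the AI divide. This is not only for the sake of developing countries, but also for our own.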

Transcript of AI Scientist Panel (Q&A of Senator Sanders and Lan Xue)

Senator Sanders: Dr. Xue, the international community has come together to try and prevent nuclear war. The global community has come together to address pandemics that have killed millions of people. In your judgment, has the international community come together to address the threat posed by AI?

Lan Xue: Thank you, Mr. Senator. I want to thank you for inviting me to this panel. As someone who has been a student of S&T policy for his whole life, I see AI's development as a transformative change that we must try our best to learn how to embrace. So, I am grateful for having this opportunity to learn and to share with other colleagues.

The international community has been trying, but so far its efforts have been neither sufficient nor very effective. There are various multilateral mechanisms, such as the AI summit meetings that started in 2023 in the UK and were held this year in Delhi. There are various UN mechanisms, such as the scientific panel and the multilateral dialogue to be held in July. There are also many other regional and bilateral initiatives.

But these efforts are fragmented and not as effective as they should be. There are several reasons for this situation. The first one is the uncertainty involved in AI risks: people may not be able to see the AI risks ahead and may not understand their behaviors. The second one is the so-called pacing problem: AI technological change moves much faster than governance changes. The third one is that the geopolitical situation makes it hard for major AI countries to come together to design effective mechanisms to build guardrails against AI risks.

Senator Sanders: Let us now turn to what can be done. Dr. Xue, what is China doing to regulate the risks presented by AI?

Lan Xue: China's overall approach is trying to strike a balance between innovation and safety. This is implemented in an agile and adaptive way.

First of all, on agile governance: given that regulation and policy are always slower than the technology, you have to give up the idea that you want to be accurate and comprehensive all the time, and try to act quickly even though you may still have some holes here and there. You can update later anyway. The second piece is for the government and companies to stop playing the game of cat and mouse and try to work together to address AI risks. The third piece of agile governance is to avoid using heavy punishment when a nudge may work, except where there is clear danger to public interests.

Second, on adaptive governance: China did not have the ambition to develop an overarching governance framework in a single stroke, but is taking a learning-by-doing approach. First, China developed a set of governance principles to provide guidelines. It later developed some foundational legislation that provided a legal framework for AI systems to operate in, including the personal information protection law, the data security law, the cybersecurity law, etc. China also came out with various regulations in response to the new advances in AI. For example, in response to large language models, China came up with the "Temporary Measures for the Management of Generative AI Services". These regulations are updated from time to time to adapt to the new technologies. Chinese companies have also developed voluntary commitments for safe practice (for example, at the 2025 WAIC, leading Chinese AI companies signed up to updated commitments).

Adding all these elements together, China has built a multi-layered system to regulate AI risks. It still has weaknesses and problems, but it has been able to support China's AI development in a balanced way.

Senator Sanders: Dr. Xue, in a previous lecture, you called geopolitical rivalry "the big elephant in the room that people do not necessarily want to talk about." Let's talk about it. How can China, the US, and other countries come together to promote international cooperation around AI?

Lan Xue: First, we have to change the inaccurate narrative that the US and China are engaged in an AI race. At the moment, many leading companies are US and Chinese companies, but disruptive innovation can change the landscape entirely, so it is quite possible that years later there might be companies and models from other countries. So, the ultimate race is among global companies over who can provide better-performing models and services while minimizing the risks to society.

Second, mindful of the geopolitical rivalry, let's find areas in which the US and China have mutual interests, such as guardrails against AI risks. If one country is not safe, none of us is safe. So, AI safety is an area that the US and China can collaborate on: developing safety standards, safety technologies, protocols for emergencies, and so on.

Third, the US and China can work together to promote capacity building in the global community aimed at reducing the AI divide. It is hard to imagine a world in which only a few countries and a few companies have the most powerful tools while the rest of the world is impoverished with nothing. That kind of scenario is frightening! So, I think the US and China should have a common interest in working together to bridge the AI divide, not only for the developing world, but also for ourselves.
